Microsoft has apologised for racist and sexist messages generated by its Twitter chatbot. The bot, called Tay, was launched as an experiment to learn more about how artificial intelligence programs can engage with internet users in casual conversation. The program had been designed to mimic the speech of a teenage girl, but it quickly learned to imitate offensive language that Twitter users started feeding it. Microsoft was forced to take Tay offline just a day after it launched. In a blog post, the company said it takes "full responsibility for not seeing this possibility ahead of time."

Warren Buffett's Berkshire Hathaway posts record operating profit

Warren Buffett's Berkshire Hathaway posts record operating profit

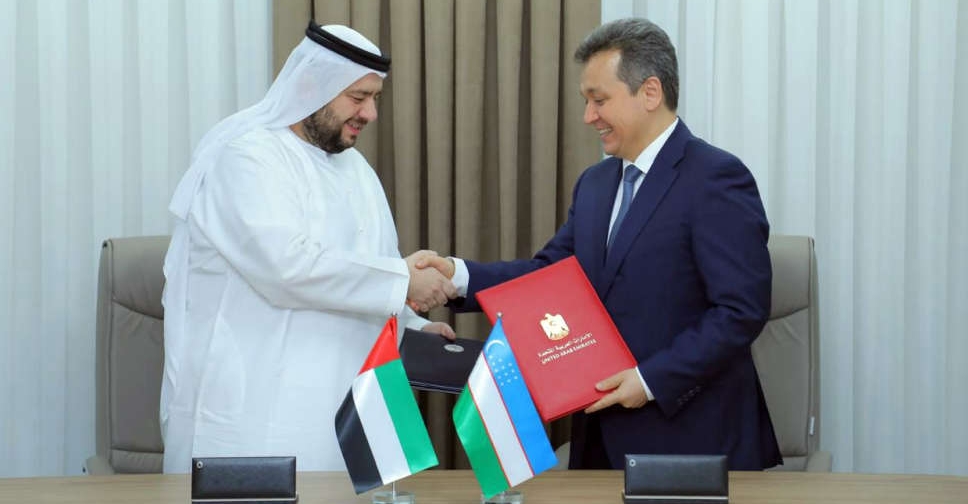

UAE, Uzbekistan sign digital infrastructure deal

UAE, Uzbekistan sign digital infrastructure deal

TECOM Group profit increases 15% on record high occupancy levels

TECOM Group profit increases 15% on record high occupancy levels

Sony and Apollo submit $26 billion Paramount offer, WSJ reports

Sony and Apollo submit $26 billion Paramount offer, WSJ reports

UAE, Iran expand cooperation in additional sectors

UAE, Iran expand cooperation in additional sectors